Kafka

Get started⚓︎

Hydrolix Projects and Tables can continuously ingest data from one or more Kafka-based streaming sources.

Kafka source configuration is completed using the KafkaSource model or through the Web UI.

👍 The basic steps are:

- Create a Project/Table

- Create a Transform

- Configure the Kafka Source and Scale.

These instructions assume that the project, table, and transform are already configured. For setup details, see Projects & Tables and Write Transforms.

Set up a Kafka source through the API⚓︎

To create, update, or view a table's Kafka sources through the Hydrolix API, use the Create Kafka sources endpoint. Note that you will need the IDs of your target organization, project, and table in order to address that endpoint's full path.

Send a JSON document describing the connection between your Hydrolix table and the Kafka data streams.

For example, the following JSON document sets up a connection between a pair of Kafka bootstrap servers running at the domain example.com and the Hydrolix table my-project.my-table.

Example⚓︎
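As a minimal sketch of such a request, the script below writes a source definition to a file and, only when connection details are present in the environment, posts it with curl. The source, transform, topic, and pool names are placeholders. The endpoint path, the bearer-token header, and the environment variable names (HDX_HOST, HDX_TOKEN, ORG_ID, PROJECT_ID, TABLE_ID) are assumptions for illustration; confirm them against the API reference for your Hydrolix version.

```shell
# Write the Kafka source definition. All names and hosts are placeholders.
cat > kafka-source.json <<'EOF'
{
  "name": "my-kafka-source",
  "type": "pull",
  "subtype": "kafka",
  "transform": "my-transform",
  "table": "my-project.my-table",
  "settings": {
    "bootstrap_servers": [
      "kafka-1.example.com:9092",
      "kafka-2.example.com:9092"
    ],
    "topics": ["my-topic"]
  },
  "pool_name": "my-kafka-pool",
  "k8s_deployment": {
    "cpu": 1,
    "replicas": 1,
    "service": "kafka-peer"
  }
}
EOF

# Send the request only when HDX_HOST and HDX_TOKEN are set.
# The URL layout below is an assumption, not a confirmed path.
if [ -n "${HDX_HOST:-}" ] && [ -n "${HDX_TOKEN:-}" ]; then
  curl --fail --silent --show-error \
    --request POST \
    --header "Authorization: Bearer ${HDX_TOKEN}" \
    --header "Content-Type: application/json" \
    --data @kafka-source.json \
    "https://${HDX_HOST}/config/v1/orgs/${ORG_ID:?set ORG_ID}/projects/${PROJECT_ID:?set PROJECT_ID}/tables/${TABLE_ID:?set TABLE_ID}/sources/kafka/"
fi
```

Keeping the definition in a file makes it easy to review or version before sending it.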

Configuration properties⚓︎

The JSON document describing a Kafka-based data source requires the following properties:

| Property | Purpose |
|---|---|
| name | A name for this data source. Must be unique within the target table's organization. |
| type | The type of ingestion. Only "pull" is supported at this time. |
| subtype | The literal value "kafka". |
| transform | The name of the transform to apply to this ingestion. |
| table | The Hydrolix project and table to ingest into, expressed in the format "PROJECT.TABLE". |
| settings | The settings to use for this particular Kafka source. |
| pool_name | The name that Hydrolix will assign to the ingest pool. |
| k8s_deployment | Used only for Kubernetes deployments. Describes the replicas, memory, CPU, and service to use for the Kafka ingest pool. For example: "k8s_deployment":{<br> "cpu": 1,<br> "replicas": 1,<br> "service": "kafka-peer"<br> } |

The settings object⚓︎

The settings property contains a JSON object that defines the Kafka servers and topics this table should receive events from.

| Element | Description |
|---|---|
| bootstrap_servers | Array of Kafka bootstrap server addresses, in "HOST:PORT" format |
| credential | Name of an authentication credential, mutually exclusive with credential_id |
| credential_id | UUID of an authentication credential, mutually exclusive with credential |
| topics | Array of Kafka topics to import from the given servers |

When authenticating with a Kafka server, client certificate authentication and SASL/PLAIN can be used together or independently. Match the configuration of the Hydrolix Kafka client to the server configuration.

Set up a Kafka source through the UI⚓︎

To create a Kafka source, click the Add New button in the upper right of the UI and select Table Source. You will be redirected to a New Ingest Source form at https://YOUR-HYDROLIX-HOST.hydrolix.live/data/new-ingest-source/.

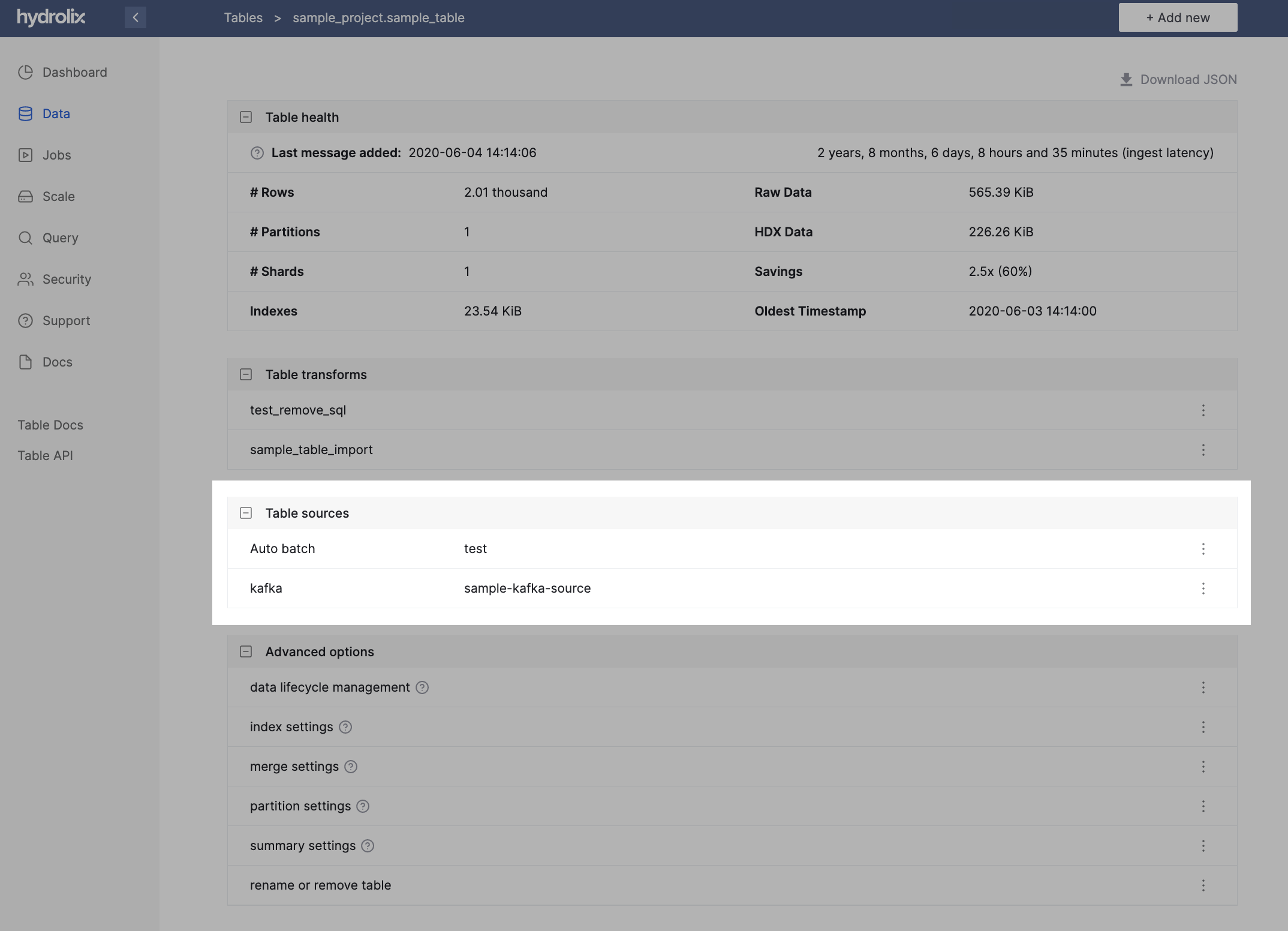

To manage your stack's Kafka sources through its web UI, visit an individual table at https://YOUR-HYDROLIX-HOST.hydrolix.live/data/tables/<table_id> in your web browser and select a source from the Table Sources widget.

Authenticate using SASL/PLAIN⚓︎

Some Kafka servers require clients to authenticate at the application layer using SASL/PLAIN, a Simple Authentication and Security Layer (SASL) mechanism.

To authenticate to a Kafka server using SASL/PLAIN:

- Create a credential of type kafka_sasl_plain.

- Specify that credential ID in the Kafka data source settings.credential_id attribute.

Use the API to create the Kafka data source and specify the credential_id.

As of v5.9, prefer the API

The Hydrolix UI does not support the credential_id field, so it cannot reference a kafka_sasl_plain credential. If the data source already exists, use the PATCH Kafka source endpoint.

The API supports the credential_id field on all Kafka data source endpoints.
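A rough sketch of attaching a SASL/PLAIN credential to an existing source follows. It assumes the kafka_sasl_plain credential already exists and that its UUID is available in CREDENTIAL_ID. The endpoint path, the bearer-token header, the environment variable names, and the choice to resend the whole settings object (rather than rely on partial merging) are all assumptions, not confirmed API behavior.

```shell
# Placeholder UUID; replace with the ID of your kafka_sasl_plain credential.
CREDENTIAL_ID="${CREDENTIAL_ID:-00000000-0000-0000-0000-000000000000}"

# Write the update. It uses credential_id only, because credential and
# credential_id are mutually exclusive.
cat > kafka-source-sasl.json <<EOF
{
  "settings": {
    "bootstrap_servers": ["kafka-1.example.com:9092"],
    "topics": ["my-topic"],
    "credential_id": "${CREDENTIAL_ID}"
  }
}
EOF

# Send the PATCH only when connection details are set.
# The URL layout below is an assumption, not a confirmed path.
if [ -n "${HDX_HOST:-}" ] && [ -n "${HDX_TOKEN:-}" ]; then
  curl --fail --silent --show-error \
    --request PATCH \
    --header "Authorization: Bearer ${HDX_TOKEN}" \
    --header "Content-Type: application/json" \
    --data @kafka-source-sasl.json \
    "https://${HDX_HOST}/config/v1/orgs/${ORG_ID:?set ORG_ID}/projects/${PROJECT_ID:?set PROJECT_ID}/tables/${TABLE_ID:?set TABLE_ID}/sources/kafka/${SOURCE_ID:?set SOURCE_ID}/"
fi
```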

Authenticate over TLS to Kafka server⚓︎

Some Kafka servers support mutual TLS and require the client to present an identifying certificate for authentication at the network transport layer. Often, a private certification authority (CA) is in use, as well.

- Mutual TLS authentication: Install the certificate and corresponding key in Kubernetes Secret keys named KAFKA_TLS_CERT and KAFKA_TLS_KEY. This certificate identifies the client.

- Publicly-signed server certificate: The Kafka server in this case is using a publicly trusted certificate. The client uses public certificate trust stores to verify the server. No additional configuration is necessary.

- Privately-signed server certificate: The Kafka server in this case is using a certificate issued from a private CA. Install the trusted, private root CA certificate in a Kubernetes Secret key named KAFKA_TLS_CA. The Kafka client verifies the server certificate against this private CA certificate.

Private CAs and client certificates

The combination of the private key and the certificate identifies the client to the server. Both can be issued by a private Certification Authority (CA).

When connecting to servers using certificates signed by private CAs, you must configure the private, trusted root certificate for TLS connections to succeed.

Frequently, a Kafka installation will use a private CA for the server certificate and client certificates issued to known applications.

Use a Kubernetes curated secret with the following options:

| Option | Expected Value |
|---|---|
| KAFKA_TLS_CA | A TLS certificate authority file, in PEM format. |
| KAFKA_TLS_CERT | A TLS certificate file, in PEM format. |
| KAFKA_TLS_KEY | A TLS key file, in PEM format. |

For example:
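As one possible sketch, the shell function below renders a Secret manifest from three PEM files and prints it to standard output. The secret name curated, the function name, and the file names in the usage comment are assumptions for illustration; adjust them to match your deployment.

```shell
# Render a Kubernetes Secret manifest holding the three Kafka TLS options.
# Arguments: CA PEM file, client certificate PEM file, client key PEM file,
# and the Kubernetes namespace of the Hydrolix cluster.
write_kafka_tls_manifest() {
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: curated
  namespace: $4
type: Opaque
stringData:
  KAFKA_TLS_CA: |
$(sed 's/^/    /' "$1")
  KAFKA_TLS_CERT: |
$(sed 's/^/    /' "$2")
  KAFKA_TLS_KEY: |
$(sed 's/^/    /' "$3")
EOF
}

# Usage (file names and namespace are placeholders):
#   write_kafka_tls_manifest ca.pem cert.pem key.pem my-namespace > kafka-tls-secret.yaml
```

The sed call indents each PEM line by four spaces so it sits inside the YAML block scalar. If the secret already holds other keys, check how they will be affected before applying a new manifest.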

To set the secret:
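A minimal sketch, assuming the secret is described in a manifest file and that kubectl is already configured for the target cluster. The function name and the file name in the usage comment are hypothetical.

```shell
# Apply a Secret manifest with kubectl, refusing to run if the file is missing.
apply_kafka_tls_secret() {
  manifest="$1"
  if [ ! -f "$manifest" ]; then
    echo "manifest not found: $manifest" >&2
    return 1
  fi
  kubectl apply -f "$manifest"
}

# Usage (file name is a placeholder):
#   apply_kafka_tls_secret kafka-tls-secret.yaml
```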

Convert Kafka Java keystore certificates to PEM format⚓︎

By default, Kafka stores its certificate and key information in a Java KeyStore (.jks) file. Hydrolix requires the files in Privacy Enhanced Mail (PEM) format.

Use keytool to export the key and certificate files.

Use openssl to convert to the PEM format.

How-to export certificate files⚓︎

- List certificates present in your keystore.

- Locate the CA certificate file, which is caroot in this example, and export it.

- Use openssl to transform it into PEM format.

- Follow the same steps for your TLS certificate file clientcert.
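The export steps above can be sketched as two small shell functions. The keystore file name, the intermediate .der file names, and the output names ca.pem and cert.pem are assumptions; the aliases caroot and clientcert come from the example above. keytool prompts for the keystore password when it runs.

```shell
# Hypothetical default; override KEYSTORE to match your keystore file.
KEYSTORE="${KEYSTORE:-client.keystore.jks}"

# Step 1: list the certificates (aliases) present in the keystore.
list_aliases() {
  keytool -list -keystore "$KEYSTORE"
}

# Steps 2 and 3: export one alias in binary DER form (keytool's default
# output), then convert the DER file to PEM with openssl.
# Arguments: alias name, output PEM file.
export_cert_pem() {
  alias_name="$1"
  out_pem="$2"
  keytool -exportcert -alias "$alias_name" -keystore "$KEYSTORE" \
    -file "${alias_name}.der" &&
    openssl x509 -inform DER -in "${alias_name}.der" -outform PEM -out "$out_pem"
}

# Usage:
#   list_aliases
#   export_cert_pem caroot ca.pem
#   export_cert_pem clientcert cert.pem
```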

How-to export key files⚓︎

Exporting a key from the Java keystore requires a different set of commands.

- Use keytool to create a new PKCS12 store.

- Use openssl to extract the private key in PEM format, and use sed to remove extra information.
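The two key-export steps might look like the following sketch. The keystore and output file names are assumptions, and the function names are hypothetical. The "extra information" is taken here to mean the Bag Attributes and Key Attributes lines that openssl prints before the PEM block.

```shell
# Hypothetical default; override KEYSTORE to match your keystore file.
KEYSTORE="${KEYSTORE:-client.keystore.jks}"

# Step 1: copy the Java keystore into a new PKCS12 store.
# Argument: destination PKCS12 file.
jks_to_pkcs12() {
  keytool -importkeystore \
    -srckeystore "$KEYSTORE" \
    -destkeystore "$1" \
    -deststoretype PKCS12
}

# Keep only the lines from "-----BEGIN" through "-----END", dropping the
# attribute lines that precede the PEM block.
strip_bag_attributes() {
  sed -n '/-----BEGIN /,/-----END /p'
}

# Step 2: extract the unencrypted private key and clean it up.
# Arguments: source PKCS12 file, output PEM file.
extract_key_pem() {
  openssl pkcs12 -in "$1" -nocerts -nodes | strip_bag_attributes > "$2"
}

# Usage:
#   jks_to_pkcs12 client.keystore.p12
#   extract_key_pem client.keystore.p12 key.pem
```

The resulting key file is unencrypted, so restrict its permissions and remove it once the secret is in place.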

At this point, you should have the three PEM files you need to update your Hydrolix cluster with your Kafka TLS information.

Scaling⚓︎

To scale the Kubernetes deployment for the ingest pool used by this source, including the number of replicas, memory, and CPU, see the scaling resource pools page.